SPEAKERS

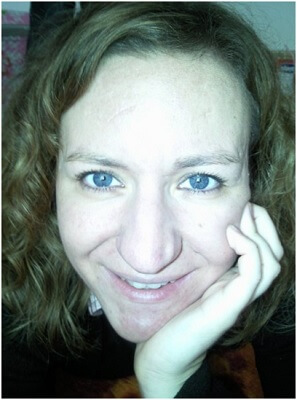

Ioanna Lekea

Assistant Professor / Hellenic Air Force Academy / Military Ethics, Philosophy of War, Applied Ethics

Title of Paper:

AI goes to war, but can we keep our promise to build ethics into automated decision-making?

Subdisciplinary Area: Military Ethics, AI, Robotics, Programming

Keywords: AI, automated decision-making, just war theory, international humanitarian law

Abstract: The aim of this paper is to explore the ethical and legal parameters, as well as the design and construction requirements, related to the operational use of autonomous robotic systems in war. The possibility of partially replacing humans with AI systems/agents is currently being investigated across several scientific disciplines. In this context, the use of autonomous robotic systems in war is expected to offer significant advantages. For instance, robots are capable of rapid data processing and quick reaction to a situation, free of the limitations that a human being naturally faces, such as lack of sleep, stress, high adrenaline and low morale. However, as we know, technology always carries perceived risks. In this case, the main risk concerns damage that the manufacturers may not have intended but that nonetheless falls outside the applicable moral and legal framework. The paper addresses a number of fundamental questions related to the use of AI systems, such as: what system specifications and rules should be applied in order to meet operational requirements without violating the legal framework for the conduct of war operations; how to deal with issues of responsibility/accountability/liability in relation to self-sufficient machine decision-making; and, finally, whether and in which ways ethics could be programmed so as to become part of an AI mechanical system.

Pireos str. 100, Gazi, Athens, 118 54